If you’ve ever talked to anyone who understands anything about digital lighting, you might have come across the term ray-tracing. It’s a technology that’s considered the holy grail of computer-generated graphics – the ability to send millions of rays into a scene and have them return accurate data on the objects they bounce off, which can be used to calculate lighting that comes closer to replicating the real thing than anything we’ve managed yet. It’s technology that has been used in films for years to help create lifelike CGI sequences, but it requires a massive amount of computational power. Having it work in real-time? Impossible.

Or, at the very least, improbable up until now. Ray-tracing is a term Nvidia hopes you’re familiar with, because it’s the biggest new feature in their RTX line of 20-series graphics cards. It brings real-time ray-tracing to video games, opening a new frontier for visual fidelity and accurate light sources, all of which have been faked incredibly well up until now. The RTX 2080 Ti is the most powerful of the three-card line-up, but it turns out it’s not ray-tracing that makes it alluring in terms of performance. It’s simply the fastest card on the market right now, achieving 4K/60FPS in most modern titles without breaking a sweat. The problem is that you’re going to have to pay an exorbitant amount for that pleasure, while missing out on its most advertised features almost entirely.

At the core of Nvidia’s new architecture, dubbed Turing, are two new types of processing cores – RT and Tensor. The RT core, of which the RTX 2080 Ti has 68, is responsible for the computationally intensive process of ray-tracing. This means obtaining data from objects that don’t even render in a scene, which was usually impossible thanks to an optimization technique known as frustum culling (the engine only rendering objects within your view, to save resources). Tensor cores, by comparison, handle machine-learning techniques that are making an appearance on gaming cards for the first time. That might sound odd, but they’re put to good use for some cutting-edge anti-aliasing techniques. One such technique is called Deep-Learning Super Sampling (DLSS), which uses machine learning to combine images for higher resolution outputs and sharper anti-aliasing, with a significant performance gain.
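To make the frustum culling point a little more concrete, here is a minimal Python sketch of the idea. It is not how Turing or any game engine actually does it: the view frustum is simplified to a cone, the scene is a single mirror and a single spherical "enemy", and every name and number in it (`in_view_cone`, `ray_hits_sphere`, `reflect`, the positions) is made up for illustration.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def in_view_cone(camera_pos, forward, point, half_fov_deg=45.0):
    """Simplified stand-in for frustum culling: is the point inside the view cone?

    `forward` must be a unit vector. Real engines test against six frustum planes.
    """
    to_point = tuple(p - c for p, c in zip(point, camera_pos))
    dist = math.sqrt(dot(to_point, to_point))
    if dist == 0.0:
        return True
    cos_angle = dot(forward, to_point) / dist
    return cos_angle >= math.cos(math.radians(half_fov_deg))

def reflect(direction, normal):
    """Mirror a unit direction vector about a unit surface normal."""
    dn = dot(direction, normal)
    return tuple(d - 2.0 * dn * n for d, n in zip(direction, normal))

def ray_hits_sphere(origin, direction, center, radius):
    """True if a ray (unit `direction`) starting at `origin` hits the sphere."""
    oc = tuple(o - c for o, c in zip(origin, center))
    b = 2.0 * dot(oc, direction)
    c = dot(oc, oc) - radius * radius
    disc = b * b - 4.0 * c
    if disc < 0.0:
        return False  # the ray's line misses the sphere entirely
    t_far = (-b + math.sqrt(disc)) / 2.0
    return t_far > 0.0  # at least one intersection lies in front of the origin

# Hypothetical scene: camera at the origin looking down +z, a mirror at z = 5
# facing the camera, and an enemy standing behind the camera.
CAMERA = (0.0, 0.0, 0.0)
FORWARD = (0.0, 0.0, 1.0)
MIRROR_NORMAL = (0.0, 0.0, -1.0)
ENEMY_CENTER = (0.5, 0.0, -5.0)
ENEMY_RADIUS = 1.0

# The camera ray travels straight ahead and lands on the mirror at z = 5.
hit_point = (0.0, 0.0, 5.0)
reflected = reflect(FORWARD, MIRROR_NORMAL)

print("enemy inside view cone:", in_view_cone(CAMERA, FORWARD, ENEMY_CENTER))
print("reflected ray hits enemy:", ray_hits_sphere(hit_point, reflected, ENEMY_CENTER, ENEMY_RADIUS))
```

In this toy scene the enemy fails the view test, so a traditional renderer would cull it, yet the ray bouncing off the mirror still finds it. That is the kind of off-screen data a ray-traced reflection needs and a culled, rasterized scene throws away.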

This specific Gigabyte model features a pristine black backplate and a shroud that encloses the massive heatsinks on the front of the card, rounded out by a triple fan design that keeps things cool under load. The fans spin up independently based on load, which keeps things quiet when the card is idle or only lightly taxed. But when you’re really pushing this card, you’ll know it. The Gigabyte design generates an immense amount of noise under stress, which will pierce through your headphones if you’re in close proximity. It’s a far cry from the quiet design of the Founders Edition model, which you’ll unsurprisingly need to pay a premium for.

The real problem right now isn’t noise though, it’s support. Windows 10 updated only last week to support ray-tracing at all, and on the market today you can find exactly one game that uses it: Shadow of the Tomb Raider. Nvidia has touted titles in the future that will capitalize on ray-tracing, such as Battlefield V next month and Metro: Exodus next February, but they’re essentially asking you to take them at their word on how game-changing it might be. Battlefield V, for example, might offer you a competitive advantage by allowing for the real-time reflection of foes behind you. But according to reports from Gamescom (since none of this can actually be tested yet), that comes at a price: the sort that forces you to downscale to 1080p and hope for 60FPS some of the time. Not exactly what you’d want from a card this expensive.

DLSS is a different story though. Final Fantasy XV features a benchmarking tool that shows the difference between this machine-learning approach and Nvidia’s previous TSAA attempt, and the results are staggering. With DLSS enabled, the benchmark receives top marks, with a silky smooth presentation and extremely sharp anti-aliasing. TSAA delivers comparable (and arguably marginally better) image quality, but it drastically impacts performance, to the point where the benchmark settles on just an average score. DLSS, like ray-tracing, needs more support too, but at least its effects are far more front-facing and useful if you’re looking to increase performance drastically.

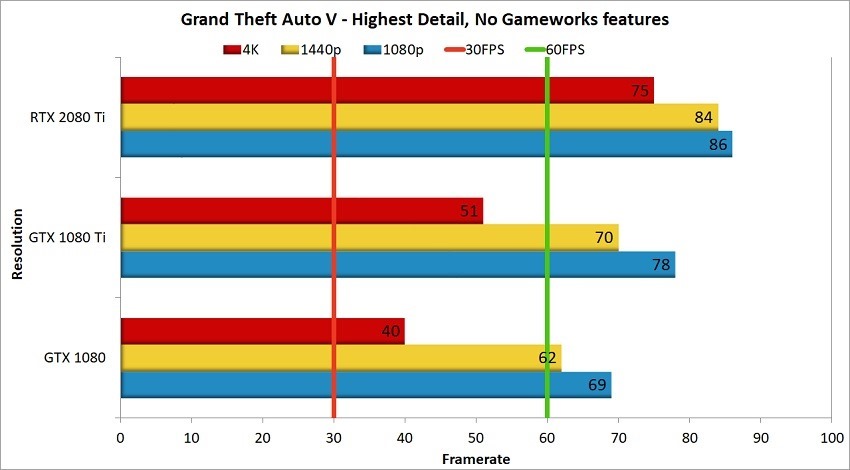

So if ray-tracing is hardly supported right now and DLSS still needs a proper rollout, what’s the point of a new line of cards anyway? Well, Turing offers its own improvements in terms of traditional rasterization and raw output too, which makes it an alluring proposition for those looking for the best possible 4K performance right now. Going through the same benchmarks and titles we tested the previous table topper, the GTX 1080 Ti, on, the 2080 Ti is a clear winner. It’s not the leap that Pascal offered over Maxwell, but it’s certainly delivering on the promises of 60FPS locks at the highest settings at 4K across the board.

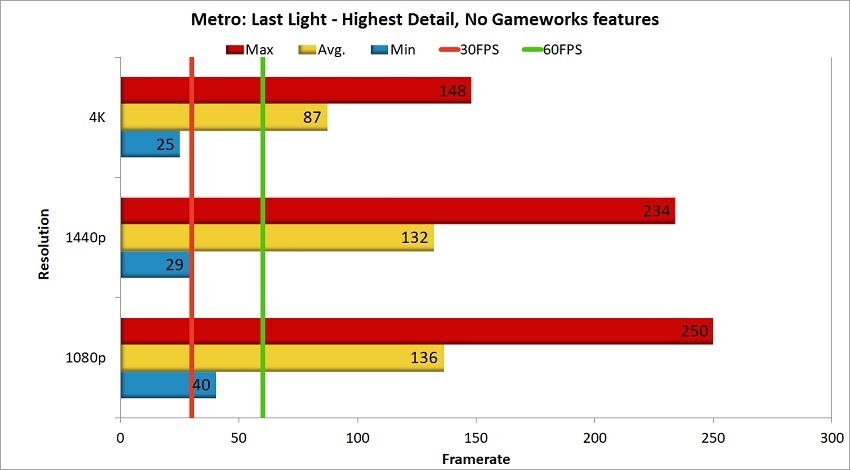

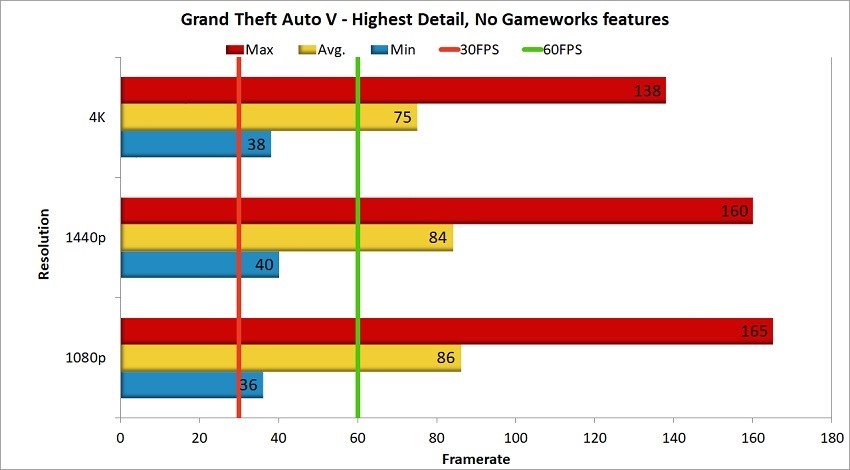

That’s evident in Metro: Last Light, GTA V and more. Rendering at 4K was really no sweat for this new Turing architecture, and it’s likely that nothing will really push it past that for some time. The 2080 Ti is the ultimate future-proofing you could ask for right now if you’re just concerned with high frame rates and resolutions, and less with what another generation of RTX cards might bring for newer rendering features.

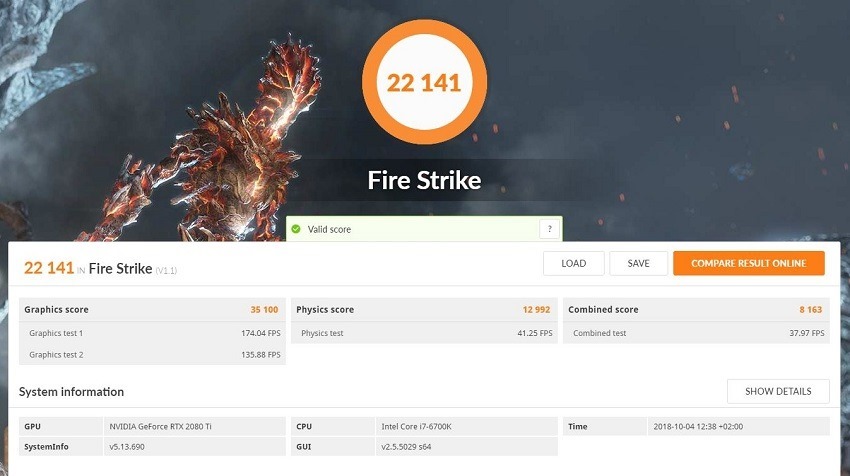

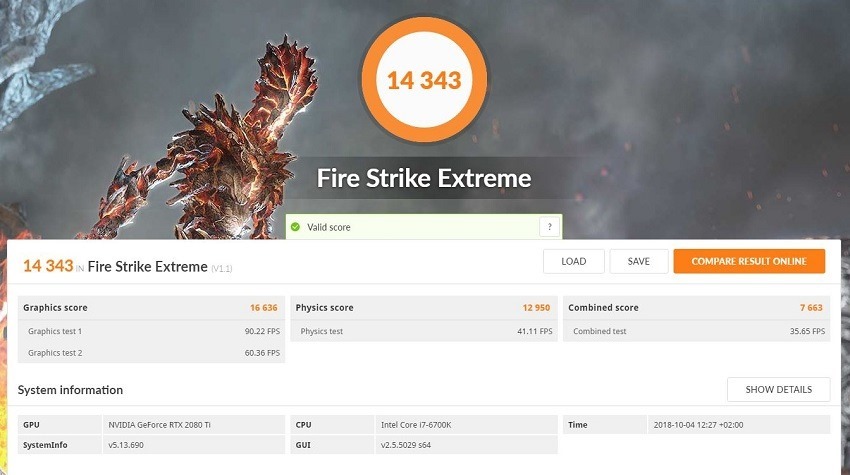

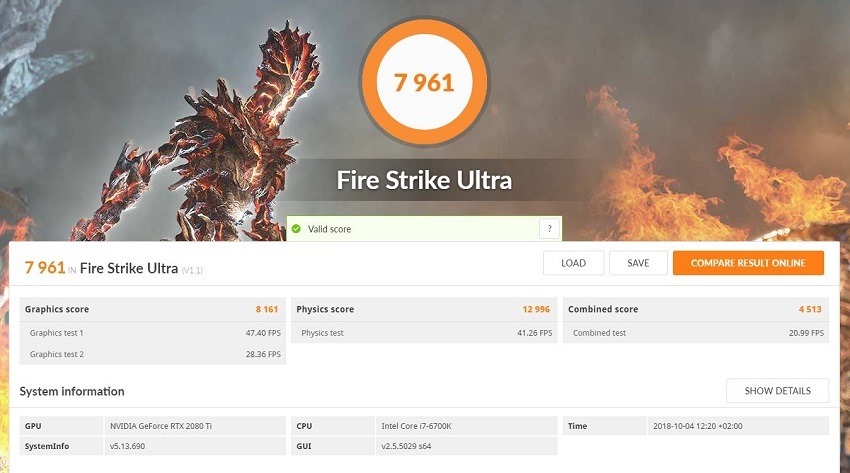

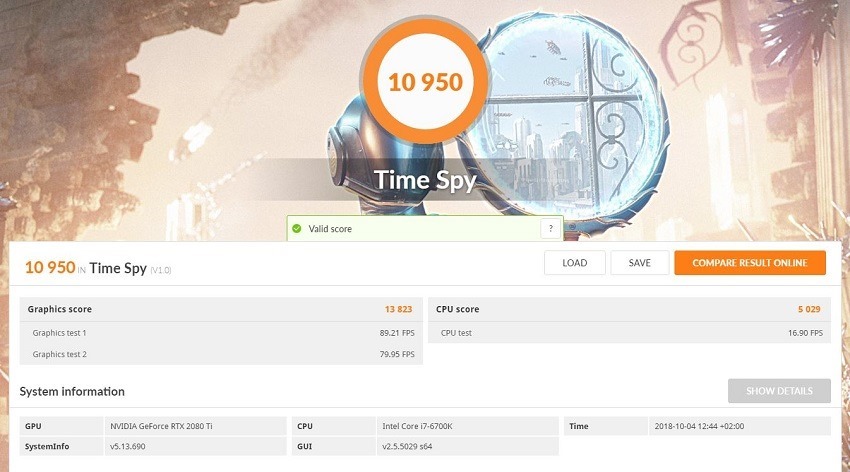

Looking at 3DMark’s multiple tests, it’s also clear that anything below 4K seems a bit pointless for a card like this. Purchasing an RTX 2080 Ti for lower resolutions really makes no sense, considering it reaches frame rates that even the fastest monitors on the market aren’t capable of keeping up with. It is somewhat alluring at 1440p if you’re aiming to hit 144Hz at this middle-ground resolution. It’s an extremely pricey route to get there and requires additional hardware (such as a suitable monitor) to really capitalise on, but it still fits the future-proofing angle.

That future-proofing comes at a hefty cost though. Right now the RTX 2080 Ti has astronomical pricing locally, and you’ll likely be looking to spend at least R25,000 on this specific Gigabyte card. By comparison, you can grab the same Gigabyte-branded variant of the last cream of the crop, the GTX 1080 Ti, for just over R11,000. That means you could get two of those for less than a single RTX 2080 Ti, struggle a little with SLI integration but still achieve much better results. You would be giving up DLSS and ray-tracing, though, which will matter more once those features become commonplace.

And that’s why the entire RTX line feels a little underwhelming right now. Yes, they’re phenomenal cards that are blisteringly fast, but a lot of what you’re paying for comes down to the two new types of cores that are sitting unused by most graphical applications right now. Nvidia is really hoping you take a bet (an expensive one at that) on the future with these cards, with the 2080 Ti being the biggest bet of them all. There’s no telling just how long adoption of these new techniques will take, or even how well they will perform when they eventually arrive.

Waiting on your card to reach its natural potential also means that you’re running the risk of paying a premium now, only to have better RTX cards release down the line with more power, more capabilities and a healthier market of supported games to make full use of them. There’s simply no reason to invest in RTX architecture right now, despite it being phenomenally fascinating technology that will certainly change the landscape of how games are made in the future. If you simply must have the best card for 4K gaming on the market right now, there’s no question that the 2080 Ti is it. But it’s far from the most intelligent purchasing decision you could make.

Last Updated: October 10, 2018

| Gigabyte RTX 2080 Ti | |
|---|---|
| The RTX 2080 Ti is a marvel in engineering that brings forth a new frontier for PC gaming and rendering technologies alike. It’s just not the smartest purchasing decision right now, given that most of its features will lie dormant until products start using them effectively. | |

Viper_ZA

October 10, 2018 at 13:39

My watercooled 1080ti will suffice for now, playing at 1440p. Will wait for a considerable price drop, then splurge so I can hit the 144 fps mark just because 😛

G8crasha

October 10, 2018 at 14:03

I’m still sitting with my Gigabyte GeForce GTX 970 G1 Gaming Edition (can you believe, I paid R6300 for it in 2015. Now it’s priced at R8780 and it so outdated! Man, things have gone bad for us economically)!

Unless you are exclusively gaming in 4K, I don’t see any reason for this card yet!

Viper_ZA

October 10, 2018 at 14:06

Yep, the Randela is weak. Curious as to what AMD’s new cards will be like, seeing that Vega was disappointing.

Geoffrey Tim

October 10, 2018 at 15:22

I…don’t see any need to upgrade beyond my 1080. I have a 1080p screen, and everything runs like butter. When RTX 2nd gen cards come along, and most new games support ray tracing, I’ll upgrade then.

Viper_ZA

October 10, 2018 at 15:23

Just wait for the price drop though, maybe 2nd gen cards will launch at the R30k mark? 🙂

Pariah

October 11, 2018 at 09:21

I’ll wait 3 years from now – the other stuff in my box will need an upgrade at that point so it doesn’t make sense for me to buy anything at this stage. My 1060 is giving me everything I need.

G8crasha

October 10, 2018 at 13:58

The biggest bottleneck will be the supporting hardware. The GFX card is all nice and pretty and shiny, and oh so powerful, but if you ain’t sitting with the equally powerful hardware (CPU and sufficient RAM), chances are, the CPU will be a bottleneck. At least that’s what other reviewers have said when they paired these cards with i5s and less!

Viper_ZA

October 10, 2018 at 14:06

Anyone looking to pair a R25k card with an i5 CPU is asking for it lol, hopefully my Ryzen Gen1 will not be a bottleneck 😀

Admiral Chief

October 10, 2018 at 14:07

6/10

Wow

[Cancels pre-order]

.

.

.

[Realises pre-order not possible with wallet.today]

.

.

.

[Cries in 1060]

JWT-80

October 10, 2018 at 14:42

Still loving my 1060 🙂

Pariah

October 11, 2018 at 09:19

Ditto. I don’t have a 4k monitor, so the 1060 is still running everything I can throw at it without a sweat.

Admiral Chief

October 11, 2018 at 11:13

Same here man, no complaints

Yondaime

October 11, 2018 at 12:28

1060 all the way baby

Magoo

October 11, 2018 at 10:26

Seen so many people actually purchasing this. South African people. WTF

Paul Aguilar

October 24, 2018 at 17:16

Must have a really nice crystal ball with RTX shading to know what will replace the 2080ti’s considering it’s only been like a month since release and there isn’t out in the market to even hint at challenging it’s performance and features.