I’ve got an odd skill that’s essentially dead: I can develop actual film from vintage cameras, process the stuff in a darkroom and use groovy chemicals to create physical copies of your treasured memories. It’s an utterly useless ability these days, more pointless than an inflatable dartboard. The Darryn of that timeline would probably have killed (allegedly again) for the cameras of today: DSLRs which can double as cinematic movie rigs, or smartphones which give you decades of experience with a single tap of a button.

That’s where the money is these days, as everyone from Samsung to Apple is touting their flagship devices as the benchmark in mobile photography. That market is about to get a little bit more crowded when the Essential smartphone from Android co-founder Andy Rubin launches. On the surface, it looks pretty decent: an edge-to-edge display, thin as a wafer and packed with bleeding-edge hardware.

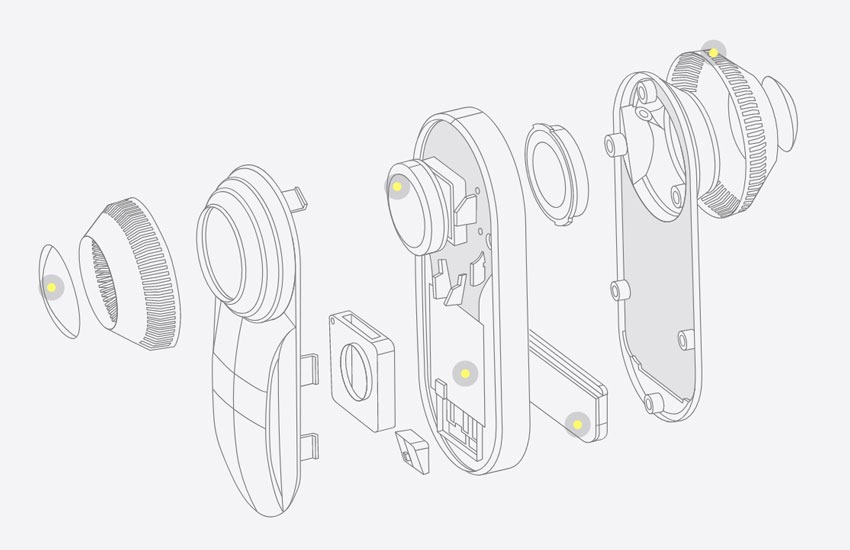

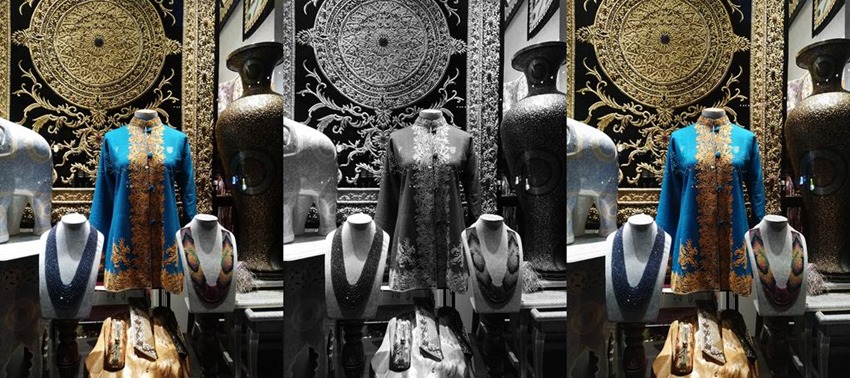

While being a powerhouse flagship is a selling point in its own right, Essential is also banking on the phone being the absolute best photography machine that you can fit into your pocket. “When taking a still picture, Essential Phone activates both cameras at once. The monochrome and color images are then fused to create a final photograph with rich, deep clarity,” Essential’s imaging expert Yazhu Ling wrote in a blog post.

[quotes quotes_style=”bquotes”]We were not willing to sacrifice image-quality in low light which is a common point of frustration for many people who rely on their phone’s camera. In a nifty bit of engineering we were able to accomplish both those goals.[/quotes]

While Huawei and Motorola have used that exact same technique in their devices, the Essential’s take on that method appears to have produced a sharper and cleaner image, if you can believe the photos shown so far. This all ties in with what Ling calls subjective tuning, a process which allows the Essential’s Image Signal Processing software to fine-tune itself in any situation and take the “right picture in millions of different scenarios.”

[quotes quotes_style=”bquotes”]

“Our subjective tuning process began in January 2017, and during that time, we have gone through 15 major tuning iterations, along with countless smaller tuning patches and bug fixes,” Ling wrote.

[/quotes]

[quotes quotes_style=”bquotes”]

We have captured and reviewed more than 20,000 pictures and videos, and are adding more of them to our database every day. The key to subjective tuning is capturing all types of pictures in the wild, identifying systematic image quality problems, and adjusting the ISP setting to address them. In order to address an image problem, however, we have to analyse multiple pictures to determine the root cause. Let’s say one of the captured images looked soft; our thought process goes something like this: Is that softness a result of focus failure or camera shake?

Or is it an issue we need to address through tuning? Was it observed in this one image only, or was it a recurring issue? And if so, is there a particular type of scene where it’s a problem? For example, is it only in a specific gain/lux range? Does it happen more in highlight/midtone/shadow regions? Does it happen before or after dual-camera fusion? Does it apply to only fine textures with subtle contrasts, or does it apply to well-defined edges?

Asking these questions can help us narrow down which components in the ISP we should try to fix—and once a fix is identified, we must test it across all types of pictures again to ensure that by fixing one thing, we didn’t inadvertently cause other kinds of images to break.

[/quotes]

The Essential will have its work cut out for it when it does eventually launch, as the current crop of smartphones takes delightful pictures. I still think the ceiling of quality has been reached with these cameras, but I’m far more interested in devices that can make up for the shortcomings of smartphones: cameras which can take photos quicker, bring a subject into focus even faster and adapt to any environment.

It should be interesting to see if the Essential can live up to these expectations when it finally arrives.

Last Updated: July 28, 2017