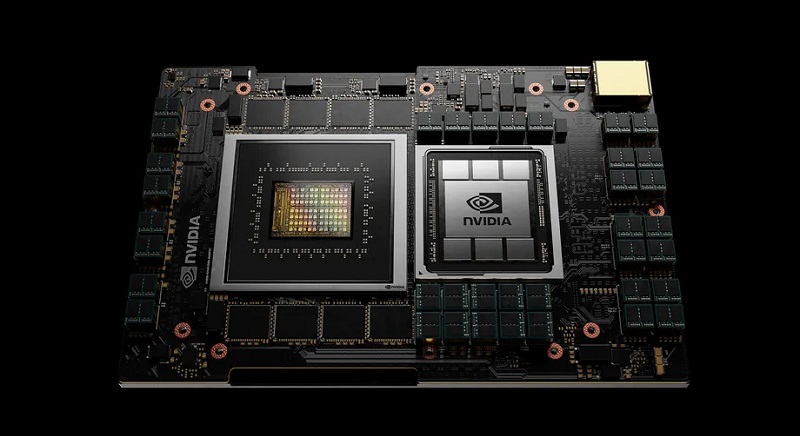

Nvidia may be a big player in the GPU space, but it hasn’t had much success on the CPU side of things, which is probably why it’s dropping $40 billion to acquire chip designer Arm. And while that acquisition has yet to be finalised, it seems that the company is planning to get back into the CPU game in a big way with the announcement of its new Arm-based Nvidia Grace chips, which are designed specifically for AI data centres.

Named after computing pioneer Grace Hopper, the new chips are looking to offer “10x the performance of today’s fastest servers on the most complex AI and high-performance computing workloads,” according to Nvidia. The company has made it clear before that it sees a big future in AI and wants to play heavily in this space, and this announcement is a clear first step towards achieving that and beating its rivals.

Nvidia hasn’t revealed any specs for its new chip yet, though AnandTech says it features a fourth-gen NVLink with a record 900 GB/s interconnect between the CPU and GPU. This is greater than the memory bandwidth of the CPU, which means that Nvidia’s GPUs will have a cache-coherent link to the CPU that can access the system memory at full bandwidth, allowing the entire system to have a single shared memory address space rather than multiple smaller ones. Which, to non-techies, essentially means that it processes big numbers fast.

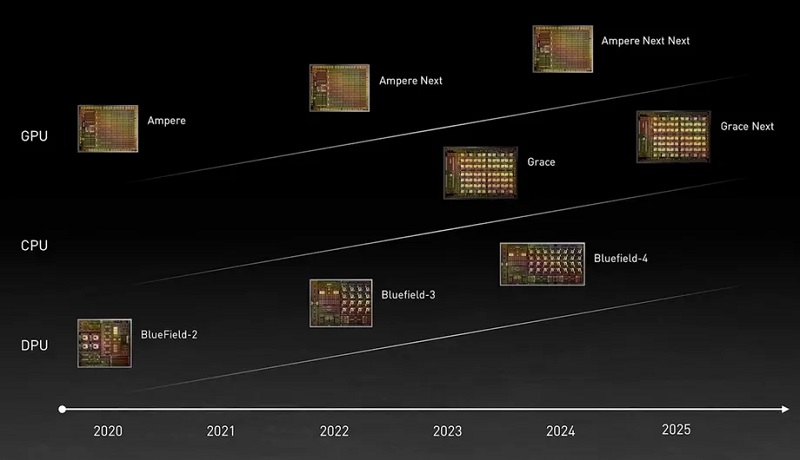

The chips are only scheduled for release in 2023, by which time Nvidia’s acquisition of Arm should hopefully be complete. It will be interesting to see what Nvidia and its new chips will be able to do in the AI space, and as long as the company doesn’t decide to change its name to Skynet somewhere along the way, it should only be good for the future of AI computing.

Last Updated: April 14, 2021